The High Performance Internet Platform 648610325 Guide presents a structured blueprint for resilient, low-latency systems. It starts from a clear, measurable goal with practical milestones. Its core patterns emphasize asynchronous microservices, edge caching, and data locality. The guide also covers throughput tactics, capacity planning, and proactive resilience measures such as balking and incident response. Finally, it outlines concrete paths to graceful degradation and rapid rollback.

How to Define a High-Performance Platform Goal

Defining a high-performance platform goal establishes the central objective and scope for all subsequent work. A good goal statement spells out intended outcomes, acceptance criteria, and measurable milestones, for example a tail-latency ceiling and an availability target. It should strike a balance between resilience and innovation so that teams deliver consistently.

Key considerations are high availability and capacity planning: the goal should ensure scalable performance, predictable reliability, and awareness of resource use across workloads, without constraining how teams design solutions.
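To make acceptance criteria measurable, a goal can be written as explicit targets and checked against observed data. The sketch below assumes a goal defined by a p99 latency ceiling and an availability floor, computed with the nearest-rank percentile method; `PlatformGoal`, `meets_goal`, and the target values are hypothetical illustrations, not figures from this guide.

```python
import math
from dataclasses import dataclass


@dataclass(frozen=True)
class PlatformGoal:
    """Hypothetical goal with two measurable acceptance criteria."""
    p99_latency_ms: float  # tail-latency ceiling in milliseconds
    availability: float    # minimum fraction of successful requests


def percentile(samples, pct):
    """Nearest-rank percentile of a non-empty list of samples."""
    ordered = sorted(samples)
    rank = math.ceil(pct * len(ordered) / 100)
    return ordered[max(rank, 1) - 1]


def meets_goal(goal, latencies_ms, successes, total):
    """True if observed tail latency and availability both satisfy the goal."""
    p99 = percentile(latencies_ms, 99)
    availability = successes / total
    return p99 <= goal.p99_latency_ms and availability >= goal.availability


# Illustrative targets and measurements, not recommendations.
goal = PlatformGoal(p99_latency_ms=200.0, availability=0.999)
latencies = [50.0] * 98 + [180.0, 450.0]
print(meets_goal(goal, latencies, successes=9995, total=10000))  # True
```

Here the single 450 ms outlier falls above the 99th percentile, so the p99 value is 180 ms and the goal is met; a tighter availability target or a heavier tail would flip the result.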

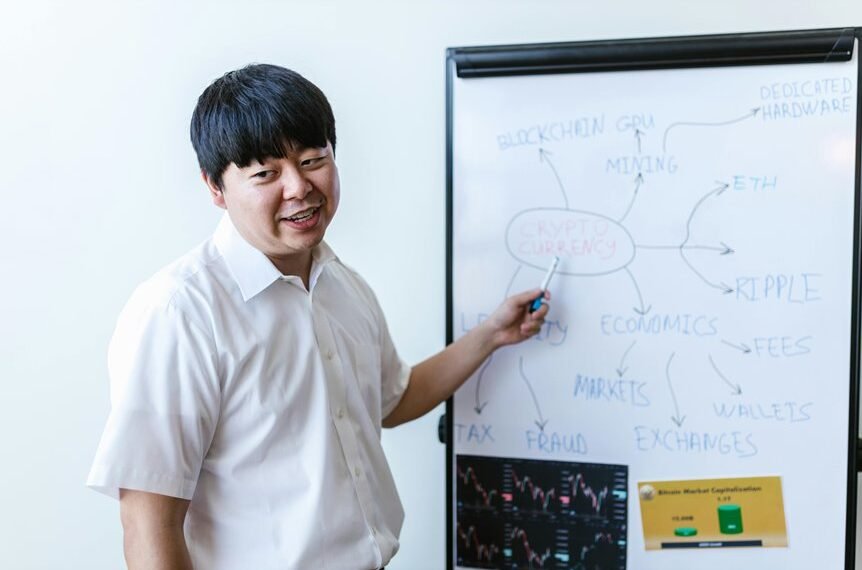

Core Architecture Patterns for Low Latency

Low-latency systems rely on carefully chosen architectural patterns that minimize processing delay, optimize data paths, and reduce contention. These architectures favor latency-aware components, asynchronous messaging, and deterministic processing stages. Typical patterns include event-driven services, microservices with bounded contexts, and edge caching strategies. The emphasis stays on locality, predictable end-to-end timing, and minimal coordination, which together enable responsive, scalable, and resilient platforms.
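Edge caching exploits locality by answering repeated requests close to the user instead of returning to the origin. As a minimal sketch, assuming an in-process cache stands in for an edge node, the class below combines a time-to-live with least-recently-used eviction; `TTLCache` and `fetch_from_origin` are hypothetical names, and a real deployment would more likely use a CDN or a dedicated cache tier.

```python
import time
from collections import OrderedDict


class TTLCache:
    """Hypothetical bounded LRU cache whose entries expire after ttl_seconds."""

    def __init__(self, max_entries=1024, ttl_seconds=30.0, clock=time.monotonic):
        self._data = OrderedDict()  # key -> (expires_at, value)
        self._max_entries = max_entries
        self._ttl = ttl_seconds
        self._clock = clock         # injectable so tests can control time

    def get(self, key, loader):
        """Return a fresh cached value, or call loader(key) on a miss or expiry."""
        now = self._clock()
        entry = self._data.get(key)
        if entry is not None and entry[0] > now:
            self._data.move_to_end(key)  # hit: mark as most recently used
            return entry[1]
        value = loader(key)              # miss or stale: go to the origin
        self._data[key] = (now + self._ttl, value)
        self._data.move_to_end(key)
        if len(self._data) > self._max_entries:
            self._data.popitem(last=False)  # evict the least recently used key
        return value


origin_calls = []


def fetch_from_origin(key):
    """Hypothetical origin fetch; records each call so cache hits are visible."""
    origin_calls.append(key)
    return f"payload for {key}"


cache = TTLCache(max_entries=2, ttl_seconds=10.0)
cache.get("/home", fetch_from_origin)
cache.get("/home", fetch_from_origin)
print(len(origin_calls))  # 1: the second request was served from the cache
```

The TTL bounds staleness while the entry limit bounds memory; both values are trade-offs that depend on how often the origin data changes.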

Practical Optimization Tactics for Throughput

In practical terms, throughput optimization builds on the latency-aware architectural foundations described above, aiming to maximize sustained data processing rates without compromising responsiveness.

The approach identifies throughput bottlenecks, prioritizes parallelism, and streamlines data paths.
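Identifying the bottleneck can start with simple arithmetic: in a steady-state pipeline, end-to-end throughput cannot exceed the capacity of the slowest stage, where a stage's capacity is its worker count divided by its per-item service time. The sketch below assumes independent stages with fixed service times and no contention; the stage names, worker counts, and timings are hypothetical. It shows why added parallelism pays off most at the current bottleneck, and how relieving one bottleneck exposes the next.

```python
def stage_capacity_rps(workers, service_time_ms):
    """Steady-state capacity of one stage in requests per second."""
    return workers * 1000.0 / service_time_ms


def bottleneck(stages):
    """Return (name, capacity_rps) of the stage with the lowest capacity."""
    caps = {name: stage_capacity_rps(w, t) for name, (w, t) in stages.items()}
    name = min(caps, key=caps.get)
    return name, caps[name]


# Hypothetical pipeline: stage -> (parallel workers, service time in ms)
stages = {"parse": (4, 2.0), "enrich": (4, 10.0), "write": (2, 4.0)}
print(bottleneck(stages))  # ('enrich', 400.0)

stages["enrich"] = (8, 10.0)  # add parallelism only where it is the limit
print(bottleneck(stages))  # ('write', 500.0)
```

Doubling the `enrich` workers raises that stage to 800 requests per second, but the pipeline only gains 100, because `write` becomes the new limit.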

Scalable caching reduces recomputation and access latency, while workload-aware batching and backpressure (slowing producers when consumers fall behind) sustain high rates without sacrificing resilience or clarity.
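Batching and backpressure can be combined with a bounded queue: the producer blocks when the queue is full, which slows it to the consumer's pace, while the consumer drains whatever is already queued into one batch to amortize per-item overhead. This is a minimal single-process sketch using Python's standard `queue` and `threading` modules; `collect_batch`, `run_pipeline`, and the capacity and batch-size values are hypothetical, and a distributed system would apply the same idea across service boundaries.

```python
import queue
import threading


def collect_batch(q, max_batch, wait_seconds):
    """Wait up to wait_seconds for one item, then add items already queued,
    up to max_batch in total. Returns [] if nothing arrived in time."""
    try:
        batch = [q.get(timeout=wait_seconds)]
    except queue.Empty:
        return []
    while len(batch) < max_batch:
        try:
            batch.append(q.get_nowait())
        except queue.Empty:
            break
    return batch


def run_pipeline(items, max_batch=8, capacity=16):
    """Stream items through a bounded queue and return the batches consumed."""
    q = queue.Queue(maxsize=capacity)  # bounded: put() blocks when full
    done = object()                    # sentinel marking the end of the stream
    batches = []

    def producer():
        for item in items:
            q.put(item)  # backpressure: waits here while the consumer lags
        q.put(done)

    worker = threading.Thread(target=producer)
    worker.start()
    finished = False
    while not finished:
        batch = collect_batch(q, max_batch, wait_seconds=1.0)
        if any(x is done for x in batch):
            finished = True
            batch = [x for x in batch if x is not done]
        if batch:
            batches.append(batch)  # a real consumer would process the batch here
    worker.join()
    return batches


batches = run_pipeline(range(20), max_batch=8, capacity=16)
print(sum(len(b) for b in batches))  # 20
```

The queue capacity caps memory and queueing delay, and `max_batch` caps the latency any single item waits inside a batch; tuning both to the workload is what makes the batching workload-aware.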

Measuring, Balking, and Resilience: From Metrics to Incident Response

Measurement, balking, and resilience work together to keep performance predictable under pressure: metrics expose system behavior, and incident response acts on it. Balking means rejecting new work immediately when the system cannot accept it, rather than letting queues grow without bound. The section connects latency budgets and congestion signals to concrete actions, detailing measurement granularity, alert thresholds, and incident playbooks. It treats traffic shaping as a proactive control that aligns capacity with demand, and it outlines decision criteria for graceful degradation, rapid rollback, and postmortem learning.
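Balking and traffic control both begin with an admission decision. The sketch below is a token bucket that refills at `rate` tokens per second up to a `burst` limit and balks, returning `False` immediately, when no token is available. Strictly speaking this is rate limiting (policing): a true shaper would delay the request rather than reject it. `TokenBucket` and its parameters are hypothetical names, and the injectable clock exists only to make the example deterministic.

```python
import time


class TokenBucket:
    """Hypothetical admission control: refill `rate` tokens per second up to
    `burst`; try_acquire() balks (returns False at once) instead of queueing."""

    def __init__(self, rate, burst, clock=time.monotonic):
        self.rate = float(rate)
        self.burst = float(burst)
        self._tokens = float(burst)  # start full so an initial burst is allowed
        self._clock = clock
        self._last = clock()

    def try_acquire(self, cost=1.0):
        now = self._clock()
        # Refill in proportion to elapsed time, capped at the burst size.
        self._tokens = min(self.burst, self._tokens + (now - self._last) * self.rate)
        self._last = now
        if self._tokens >= cost:
            self._tokens -= cost
            return True
        return False  # balk: the caller should shed or degrade, not wait


# Deterministic demo with a fake clock (seconds).
now = [0.0]
bucket = TokenBucket(rate=2, burst=3, clock=lambda: now[0])
print([bucket.try_acquire() for _ in range(5)])  # [True, True, True, False, False]
now[0] = 1.0  # one second later, two tokens have been refilled
print([bucket.try_acquire() for _ in range(3)])  # [True, True, False]
```

A request handler would typically map a `False` result to a fast rejection, such as an HTTP 429 or 503 response, or to a degraded response, which keeps latency bounded for the requests that are admitted.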

Conclusion

This guide offers a detailed blueprint for a growing, high-performance platform. Asynchronous, edge-centric patterns sustain low latency and high throughput. Practical tactics, paired with precise measurement, enable rapid response and safe rollback. Balking, combined with baseline benchmarks, informs balanced capacity planning. Clear, concise postmortems drive continuous improvement, while graceful degradation preserves user trust. Together, these practices support a system that can keep evolving.