The Reliable Online Platform 693117 Guide outlines a framework focused on security, uptime, and transparency. It emphasizes reproducible assessments, measurable criteria, and clear governance summaries to enable objective comparisons. Real-world benchmarks reveal gaps between claims and performance, guiding improvements through audit trails and risk transparency. The guide advocates standardized testing and user controls, promoting trust without hype. It sets practical steps toward accountable benchmarking, inviting careful evaluation as platforms evolve and stakes grow.

What Reliable Online Platform 693117 Means for You

"Reliable Online Platform 693117" refers to a framework of dependable digital infrastructure, transparent governance, and user-centric safeguards designed to foster trust and efficiency. It clarifies how reputable networks operate, enabling consistent access and predictable performance. For users, it highlights choices rooted in accountability and openness.

Reliable platforms make their operations transparent, which reduces ambiguity and supports informed decisions in a flexible, open digital landscape.

Core Criteria: Security, Uptime, and Transparency

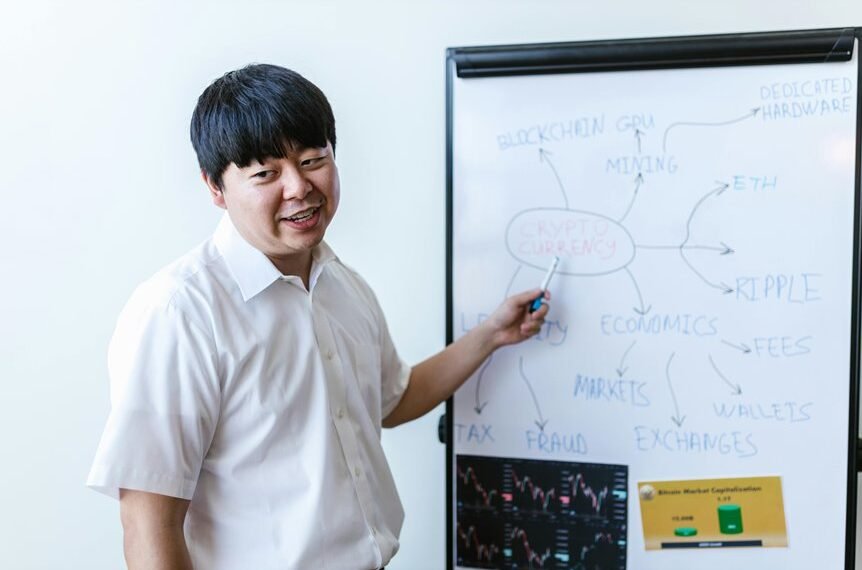

Security, uptime, and transparency form the trio of core criteria for reliable online platforms, serving as measurable pillars that underwrite trust and performance.

The analysis treats security metrics and uptime guarantees as foundational indicators.

Governance, incident response, and data handling serve as objective inputs, so stakeholders can assess resilience, accountability, and continuity without bias. Evaluations built this way suit freedom-minded users who want dependable digital ecosystems.
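To make an uptime guarantee measurable, it can be converted into a concrete downtime budget and compared with observed outages. The following is a minimal sketch, not part of the guide itself: the function names, the 30-day window, and the 99.9% target are illustrative assumptions.

```python
from datetime import timedelta


def availability_percent(period: timedelta, downtime: timedelta) -> float:
    """Share of the period during which the service was up, as a percentage."""
    if period <= timedelta(0):
        raise ValueError("period must be positive")
    if downtime < timedelta(0) or downtime > period:
        raise ValueError("downtime must be between zero and the period length")
    # Dividing one timedelta by another yields a plain float ratio.
    return 100.0 * (1 - downtime / period)


def allowed_downtime(period: timedelta, target_percent: float) -> timedelta:
    """Downtime budget implied by an availability target over the period."""
    if not 0.0 <= target_percent <= 100.0:
        raise ValueError("target_percent must be between 0 and 100")
    return period * (1 - target_percent / 100.0)


# Hypothetical example: a 30-day window with 43.2 minutes of recorded outages.
window = timedelta(days=30)
observed = availability_percent(window, timedelta(minutes=43.2))
budget = allowed_downtime(window, 99.9)
print(f"observed availability: {observed:.3f}%")
print(f"downtime budget at 99.9%: {budget}")
```

Expressing the guarantee as a budget in minutes tends to be easier to check against an incident log than a bare percentage.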

How to Compare Platforms: A Practical Evaluation Framework

A practical evaluation framework builds on the established core criteria by providing a systematic method to compare platforms. It emphasizes measurable indicators, transparent scoring, and reproducible assessments rather than impressions. Criteria include data privacy and user trust, governance summaries, and risk exposure. Analysts set aside biases, document their assumptions, and compare results across scenarios. The result supports informed, freedom-oriented choices without hype or ambiguity.
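One way to make the scoring transparent and reproducible is a weighted sum over the core criteria, with the weights written down in advance. The sketch below is only an illustration under stated assumptions: the weights, the 0 to 10 scale, and the platform names are hypothetical and do not come from the guide.

```python
# Assumed weights for the three core criteria; documenting them up front
# is what makes the comparison reproducible.
WEIGHTS = {"security": 0.40, "uptime": 0.35, "transparency": 0.25}


def weighted_score(scores: dict[str, float],
                   weights: dict[str, float] = WEIGHTS) -> float:
    """Combine per-criterion scores (0 to 10) into one weighted score."""
    missing = set(weights) - set(scores)
    if missing:
        raise ValueError(f"missing criteria: {sorted(missing)}")
    for name in weights:
        if not 0 <= scores[name] <= 10:
            raise ValueError(f"score for {name!r} must be between 0 and 10")
    total_weight = sum(weights.values())
    # Normalizing by the total keeps the result on the 0 to 10 scale
    # even if the weights do not sum exactly to 1.
    return sum(scores[name] * w for name, w in weights.items()) / total_weight


def rank_platforms(candidates: dict[str, dict[str, float]]) -> list[tuple[str, float]]:
    """Return (platform, score) pairs sorted from best to worst."""
    scored = [(name, weighted_score(s)) for name, s in candidates.items()]
    return sorted(scored, key=lambda pair: pair[1], reverse=True)


# Hypothetical platforms and scores, for illustration only.
candidates = {
    "platform_a": {"security": 8, "uptime": 6, "transparency": 10},
    "platform_b": {"security": 9, "uptime": 9, "transparency": 4},
}
for name, score in rank_platforms(candidates):
    print(f"{name}: {score:.2f}")
```

Rerunning the same scores under a different set of weights is a simple way to compare results across scenarios and see how sensitive the ranking is to the assumptions.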

Real-World Benchmarks and Next Steps for Trustworthy Choices

Real-world benchmarks show how platforms perform under authentic use, revealing discrepancies between claimed capabilities and actual behavior. The analysis focuses on measurable outcomes, stability, and security, guiding future enhancements. Trust signals emerge as indicators of reliability, while audit trails provide verifiable accountability. Next steps emphasize standardized testing, transparent reporting, and user-centric controls, so that users' freedom rests on demonstrable trust and governance.
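The gap between claims and measured behavior can be reported mechanically once both are expressed as numbers. The following sketch assumes metrics where higher is better; the metric names, values, and the `tolerance` parameter are hypothetical and chosen only for illustration.

```python
def claim_gaps(claimed: dict[str, float],
               measured: dict[str, float],
               tolerance: float = 0.0) -> dict[str, float]:
    """Return the shortfall for each metric whose measurement trails its claim.

    Assumes higher values are better. Claims without a matching measurement
    are skipped here; a fuller report would list them separately.
    """
    gaps: dict[str, float] = {}
    for metric, claim in claimed.items():
        if metric not in measured:
            continue
        shortfall = claim - measured[metric]
        if shortfall > tolerance:
            gaps[metric] = shortfall
    return gaps


# Hypothetical figures, for illustration only.
claimed = {"uptime_percent": 99.9, "requests_per_second": 1000.0}
measured = {"uptime_percent": 99.5, "requests_per_second": 1200.0}

for metric, shortfall in claim_gaps(claimed, measured).items():
    print(f"{metric}: measured value trails the claim by {shortfall:.2f}")
```

Keeping the claimed and measured inputs alongside the output gives a simple audit trail for each benchmark run.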

Conclusion

The Reliable Online Platform 693117 guide distills security, uptime, and transparency into measurable, reproducible criteria. It offers a practical framework for objective comparisons, demanding audit trails and transparent governance. Real-world benchmarks reveal gaps between claims and performance, prompting accountability and continuous improvement. In this landscape, platforms must align assurances with verifiable data, or trust will erode. A disciplined, evidence-driven approach remains the compass for trustworthy digital infrastructure and user-centric governance.